Entra ID: Azure→Splunk Log Pipeline using Azure Event Hub

- Jv Cyberguard

- Feb 12


This article is an update to Entra ID Attack & Defense: Building an Azure→Splunk Log Pipeline. We will migrate our log pipeline from the Microsoft Graph API to Entra ID diagnostic settings that stream logs to an Event Hub, and from there into Splunk.

While reviewing how the current logging pipeline works, I noticed that our visibility into non-interactive sign-ins was limited. The Splunk Add-on for Microsoft Azure docs state that the sign-in input only returns interactive sign-ins. While our attack chains in Entra Goat can be seen clearly in the audit logs, we were missing detection opportunities because we could not see service principal sign-ins.

Therefore, I have decided that before beginning Scenario 2, we will stream Entra ID sign-ins to an Event Hub and then use the Splunk Add-on for Microsoft Cloud Services to retrieve the Event Hub data. To achieve this, we will do the following:

Create an Azure Event Hub Namespace & Event Hub. This is where Entra ID diagnostic logs are streamed.

Configure Entra ID Diagnostic Settings - Stream sign-in logs (including non-interactive / SP sign-ins) to the Event Hub.

Assign the Event Hubs Data Receiver Role to My Splunk SP - Give the existing Splunk-log-collector service principal permission to read from the Event Hub.

Configure Splunk to Read from Event Hub - Set up the Splunk Add-on for Microsoft Cloud Services to pull logs from the Event Hub into Splunk.

Validate and Tune in Splunk - Verify we’re seeing SP sign-ins and build searches/dashboards

Let's begin by defining Event hubs. An Event Hub namespace is a logical container in Azure that provides a shared scope for managing, securing, and scaling one or more Event Hubs. An Event Hub is the actual high-throughput ingestion endpoint within that namespace that receives streaming data such as Entra ID sign-in and audit logs so that downstream tools like Splunk can consume them in near real time.
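To make the namespace/hub relationship concrete, here is a minimal Python sketch. The names `entragoat-ns` and `entragoat` are placeholders rather than the lab's real values, and the `servicebus.windows.net` suffix assumes the public Azure cloud.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EventHubAddress:
    """Where a single Event Hub lives: one namespace, one hub inside it."""
    namespace: str  # logical container shared by one or more hubs
    hub: str        # the ingestion endpoint that receives the events

    @property
    def fqdn(self) -> str:
        # Public-cloud namespaces are reachable under servicebus.windows.net.
        return f"{self.namespace}.servicebus.windows.net"

    @property
    def endpoint(self) -> str:
        # Endpoint form used in Event Hubs connection strings.
        return f"sb://{self.fqdn}/"


# Placeholder names for illustration only.
address = EventHubAddress(namespace="entragoat-ns", hub="entragoat")
print(address.fqdn, address.hub)
```

The point of the split is that security and scaling settings hang off the namespace, while producers and consumers always name a specific hub inside it.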

Azure diagnostic settings are the mechanism that tells a service which logs to send and where to send them. When you enable diagnostic settings on Entra ID, you select specific log categories (such as sign-in logs and audit logs) and define an Event Hub as the destination; from that point forward, Entra ID continuously streams those events into the Event Hub without any polling or manual export. In effect, diagnostic settings act as the bridge between Entra ID and the Event Hub, turning raw identity activity into a live event stream that downstream platforms like Splunk can reliably consume.

The documentation I used to create the Event Hub can be found here.

Create an Azure Event Hub Namespace & Event Hub

If you don't have a resource group, go ahead and create one. The documentation linked above shows you how to do so, as well as how to fill out the fields below.

Once the deployment is complete, you should see a page like the one below. Click Go to resource.

We are now in our Event hub namespace. From here we can create our Event hub.

When creating the event hub, in the wizard, we can accept the default settings.

The partition count determines how many parallel “lanes” an Event Hub has for processing events; more partitions can support higher throughput, but our Entra ID logs are low volume in a lab, so we chose 1 partition.

The retention time controls how long the Event Hub holds events before automatically deleting them; we set it to 1 hour, which is enough time for Splunk to ingest logs without paying for unnecessary storage.

Finally, the cleanup policy dictates what happens after retention expires — we selected Delete, so the Event Hub simply purges old events once they fall outside the retention window, keeping the environment tidy and inexpensive.
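One practical consequence of a 1-hour retention window is that Splunk has to read events within that hour. The sketch below models only the nominal rule (an event is available while it is younger than the retention period); the function name is hypothetical and Azure's actual purge timing may lag the configured value.

```python
from datetime import datetime, timedelta, timezone


def is_still_retained(enqueued_at: datetime, now: datetime,
                      retention: timedelta = timedelta(hours=1)) -> bool:
    """True if an event enqueued at `enqueued_at` is still inside the retention window."""
    return now - enqueued_at < retention


now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)  # arbitrary example time
print(is_still_retained(now - timedelta(minutes=20), now))  # inside the 1-hour window
print(is_still_retained(now - timedelta(hours=3), now))     # outside the window
```

If the Splunk input is stopped for longer than the retention period, events enqueued during the gap may no longer be readable, so a longer retention is worth considering outside a lab.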

The Azure Event Hubs Capture feature automatically captures streaming data that flows through Event Hubs to an Azure Blob Storage or Azure Data Lake Storage account. It is not available in the Basic tier, so we can skip it.

With that, we hit Create.

Configure Entra ID Diagnostic Settings

To stream all required Entra ID logs to the Entra Goat event hub, we go to:

Azure portal -> Microsoft Entra ID -> Monitoring -> Diagnostic settings.

On this page we see that we can configure the collection of the data in the bullet list.

If you remember from part 1 of the Azure to Splunk log ingestion pipeline, audit logs are already being ingested via Microsoft Graph using the Splunk Add-on for Microsoft Azure. Streaming those same logs through diagnostic settings would result in duplicate ingestion and additional cost, so only the logs that the Graph-based inputs do not return (service principal sign-ins, non-interactive sign-ins, and Microsoft Graph Activity Logs) were streamed through the Event Hub.

You should now see it here.
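For readers who prefer to see the diagnostic setting as data rather than portal checkboxes, here is a rough Python sketch of what it expresses. The dictionary shape follows my understanding of the Azure Monitor diagnostic settings request body, so treat the field names as assumptions to verify against the REST reference; the hub name and authorization rule ID are placeholders.

```python
import json

# Categories streamed at this stage of the article (names as shown in the portal).
EVENT_HUB_ONLY_CATEGORIES = [
    "NonInteractiveUserSignInLogs",
    "ServicePrincipalSignInLogs",
    "MicrosoftGraphActivityLogs",
]


def build_diagnostic_setting(event_hub_name, authorization_rule_id, categories):
    """Return a request-body-shaped dict: which categories go to which Event Hub."""
    return {
        "properties": {
            "eventHubName": event_hub_name,
            "eventHubAuthorizationRuleId": authorization_rule_id,
            "logs": [{"category": c, "enabled": True} for c in categories],
        }
    }


# Placeholder values; substitute your own hub name and authorization rule ID.
setting = build_diagnostic_setting(
    event_hub_name="entragoat",
    authorization_rule_id="<authorization-rule-resource-id>",
    categories=EVENT_HUB_ONLY_CATEGORIES,
)
print(json.dumps(setting, indent=2))
```

Keeping the category list in one place makes it easy to see later exactly which logs take the Event Hub path.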

Assign the Event Hubs Data Receiver Role to the Splunk Service Principal

Before we assign the Event Hubs Data Receiver role to the Splunk-log-collector service principal, let us quickly recap to make sure you still have it set up in Azure and configured in Splunk. This way we can connect it to the new Splunk add-on we will be using.

To recap, the Splunk add-on we used in the first Azure -> Splunk log pipeline was the Splunk Add-on for Microsoft Azure.

If you do not have the Splunk Add-on for Microsoft Cloud Services, please install it by searching for it in the 'Find More Apps' section.

Now click on Splunk Add-on for Microsoft Cloud Services. Click Add in the right corner. We now have to fill out the information. Name it what you want. Follow the steps below the "Add Azure App Account" screenshot to know where to get the remaining details for the Azure app account for this add-on.

In the Azure Portal, navigate to the Splunk-log-collector application (essentially the blueprint with all the configuration details for our service principal).

On the overview page, collect both the Application ID and the Tenant ID. You should have stored the client secret somewhere safe on your host when we created the service principal in the initial log pipeline. If you don't have a service principal for the Splunk-log-collector, or you have lost the secret (it is only shown once, right after creation), you will have to generate a new client secret for the app or create a new Splunk-log-collector service principal. Please refer to the link below: https://www.thesocspot.com/post/entra-id-attack-defense-building-an-azure-splunk-log-pipeline
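Since three similar-looking values get pasted into the add-on form, a quick sanity check can catch a value that landed in the wrong field. This is a small sketch with hypothetical helper names and made-up IDs; it only checks that the Application ID and Tenant ID parse as GUIDs and shows the Microsoft identity platform token endpoint that a client-credentials flow against the tenant would use.

```python
import uuid


def is_guid(value: str) -> bool:
    """True if `value` parses as a UUID, the format of Application and Tenant IDs."""
    try:
        uuid.UUID(value)
        return True
    except ValueError:
        return False


def token_endpoint(tenant_id: str) -> str:
    # Microsoft identity platform v2.0 token endpoint for a given tenant.
    return f"https://login.microsoftonline.com/{tenant_id}/oauth2/v2.0/token"


# Made-up IDs for illustration; never hard-code a real client secret.
tenant_id = "11111111-2222-3333-4444-555555555555"
client_id = "aaaaaaaa-bbbb-cccc-dddd-eeeeeeeeeeee"
print(is_guid(tenant_id), is_guid(client_id))
print(token_endpoint(tenant_id))
```

A client secret value is not a GUID, so if `is_guid` returns True for the value you are about to paste into the secret field, you have probably copied the secret's ID instead of its value.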

Once you fill out the form with the gathered info, click Add, and the account should then be listed here.

Now let's do what we set out to do in this section: grant the splunk-log-collector service principal the ability to read from the Event Hub.

Steps

Go to the Event Hub Namespace (not the single hub). Our graphs shouldn't be flatlined now.

Access Control (IAM) → + Add → Add role assignment

Select the Role: Azure Event Hubs Data Receiver

Assign access to our SP splunk-log-collector and then Review + assign
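The reason for assigning the role at the namespace rather than the single hub is scope inheritance: a role granted on a parent resource applies to everything beneath it. The sketch below builds the two resource IDs and checks which scope covers which; the helper names are hypothetical and the subscription, resource group, and resource names are placeholders.

```python
def namespace_scope(subscription_id: str, resource_group: str, namespace: str) -> str:
    """Azure resource ID of an Event Hubs namespace, usable as a role-assignment scope."""
    return (
        f"/subscriptions/{subscription_id}"
        f"/resourceGroups/{resource_group}"
        f"/providers/Microsoft.EventHub/namespaces/{namespace}"
    )


def hub_scope(subscription_id: str, resource_group: str, namespace: str, hub: str) -> str:
    """Resource ID of one Event Hub inside the namespace (a narrower scope)."""
    return f"{namespace_scope(subscription_id, resource_group, namespace)}/eventhubs/{hub}"


def scope_covers(assignment_scope: str, resource_id: str) -> bool:
    """A role assigned at a parent scope is inherited by resources beneath it."""
    parent = assignment_scope.rstrip("/").lower()
    child = resource_id.rstrip("/").lower()
    return child == parent or child.startswith(parent + "/")


# Placeholder identifiers for illustration only.
ns_scope = namespace_scope("<subscription-id>", "<resource-group>", "entragoat-ns")
one_hub_scope = hub_scope("<subscription-id>", "<resource-group>", "entragoat-ns", "entragoat")
print(scope_covers(ns_scope, one_hub_scope))  # namespace-level role reaches the hub
print(scope_covers(one_hub_scope, ns_scope))  # hub-level role does not reach the namespace
```

Assigning at the namespace means any additional hub created there later is readable by the same service principal without another role assignment.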

Configure Splunk to Ingest the Event Hubs Data by creating an input

We now need to pivot to Splunk to perform the final part of the setup.

Let us start by creating a new index, 'entra_eventhub', leaving everything else at the default.

Set up the Splunk Add-on for Microsoft Cloud Services to read logs from Event Hub. We do this by creating a new input. We already ensured that the splunk-log-collector account was present in the add-on.

Therefore, we now configure Inputs. Inputs → Create New Input → Azure Event Hub

Get the necessary information by navigating to your Event Hub and opening the Overview section.

And then plug it into the input parameters.

###troubleshooting!!!

...Ok, lol so it's funny ..... It has been 2 hours since I took that screenshot above. I was troubleshooting because after the above config was completed I should have been getting logs ingested into our index, but I wasn't. I checked Data Explorer in the Event Hub and logs were arriving there, but not a single event streamed into Splunk.

I checked Service Principal Sign-ins, and the app was successfully authenticating to the Event hub.

I tried generating logs by using Graph Explorer.

And it would show up in Data Explorer in the Event hub, but I was getting absolutely no events in my Splunk entra_eventhub index.

###Solution

However, I started to dig into the docs for the Splunk Add-on for Microsoft Cloud Services (https://splunk.github.io/splunk-add-on-for-microsoft-cloud-services/Configureeventhubs/) and found this piece of information. The consumer_group parameter must match the Azure Event Hub Consumer group.

Well, in Splunk we had set the consumer_group for our Entra_eventhub input to splunk but what was the consumer group in Azure for our Entra Goat event hub?

Did you notice here on the page below it is $Default?

I missed that! I'm not sure why I thought the name was arbitrary, which is why I set consumer_group to splunk in the Entra_eventhub input in Splunk.

According to Microsoft Docs, a Consumer group is, "an independent view of the event stream. Multiple consumer groups can read the same event hub simultaneously, each tracking their own position."

This means that each consumer group tracks:

its own position in the stream

its own offsets (per partition)

its own consumers

This allows Azure to say, at the same time:

“Splunk has read up to event X”

“Another tool has only read up to event Y”

When Splunk connects to an Event Hub, it effectively says:

“I am a consumer in this consumer group.”

Reading then resumes from the position last recorded for that group (its checkpoint), so only events after that point are delivered.
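The idea of independent positions is easier to see in a toy model. The class below is an in-memory, single-partition stand-in written for this explanation, not the Azure SDK or the add-on's code; the group name `other-tool` is made up.

```python
class ToyEventHub:
    """In-memory, single-partition stand-in for an Event Hub (not the Azure SDK)."""

    def __init__(self, consumer_groups=("$Default",)):
        self.events = []
        # One independent read position per consumer group.
        self.positions = {name: 0 for name in consumer_groups}

    def send(self, event):
        self.events.append(event)

    def read(self, consumer_group, max_events=10):
        """Return unread events for this group and advance only its position."""
        start = self.positions[consumer_group]
        batch = self.events[start:start + max_events]
        self.positions[consumer_group] = start + len(batch)
        return batch


hub = ToyEventHub(consumer_groups=("$Default", "other-tool"))
for n in range(3):
    hub.send(f"sign-in-{n}")

print(hub.read("$Default"))                  # ['sign-in-0', 'sign-in-1', 'sign-in-2']
hub.send("sign-in-3")
print(hub.read("$Default"))                  # ['sign-in-3']
print(hub.read("other-tool", max_events=2))  # ['sign-in-0', 'sign-in-1']
```

Reading through `$Default` never moves the position of `other-tool`, which is exactly why two tools can consume the same stream without stealing each other's events.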

The root cause of my issue was this:

If the consumer group does not exist, nothing is read

No error is thrown

Authentication still succeeds but ingestion is silent

Therefore always remember that the consumer group configured in Splunk must exactly match an existing consumer group on the Event Hub.
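A check like the following would have saved me those two hours. It is a minimal sketch with a hypothetical function name: the list of existing groups is something you would copy by hand from the Consumer Groups page of the Event Hub, and the comparison is an exact string match.

```python
def check_consumer_group(configured: str, existing: list) -> tuple:
    """Compare the consumer group set in the Splunk input with those on the hub."""
    if configured in existing:
        return True, f"'{configured}' exists on the Event Hub."
    return False, (
        f"'{configured}' is not a consumer group on this Event Hub; "
        f"available: {', '.join(sorted(existing))}"
    )


# Values from this article: the input used 'splunk', the hub only had '$Default'.
ok, message = check_consumer_group("splunk", ["$Default"])
print(ok, message)
```

Running it with the values from my setup returns `False` along with the list of groups that actually exist, which points straight at the mismatch.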

Go to Entities in our Event Hub namespace.

Selecting EntraGoat takes us to the EntraGoat eventhub in the EntraGoat Eventhub Namespace.

From there we click Entities -> Consumer Groups and see that the consumer group being used to read the event stream is $Default, not splunk.

So let's change the consumer group in the Splunk input to match that of the entragoat Event Hub.

Let's try to generate some logs and see if it works now.

Sign in to Graph Explorer, click one of the requests on the left, and then run it.

Go back to the index page in Splunk now.

And Boom! They're rolling in!

I think we are good to go now regarding scenario 2. We now have the logs we were missing coming in.
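To confirm that the categories we care about are really in the stream, it helps to count records per category in a raw message, for example one copied from Data Explorer. The sketch below assumes the usual diagnostic-log envelope of a top-level `records` array with a `category` on each record; the sample message is fabricated and trimmed, and the add-on may already split records into individual events on the Splunk side.

```python
import json
from collections import Counter


def count_categories(payload: str) -> Counter:
    """Count diagnostic records per log category in one Event Hub message body."""
    body = json.loads(payload)
    # Diagnostic settings wrap events in a top-level "records" array.
    return Counter(record.get("category", "unknown") for record in body.get("records", []))


# Fabricated sample message, trimmed to the fields used here.
sample = json.dumps({
    "records": [
        {"category": "ServicePrincipalSignInLogs", "properties": {"appId": "app-1"}},
        {"category": "ServicePrincipalSignInLogs", "properties": {"appId": "app-2"}},
        {"category": "MicrosoftGraphActivityLogs", "properties": {"requestMethod": "GET"}},
    ]
})
print(count_categories(sample))
```

Seeing `ServicePrincipalSignInLogs` in the counts is the confirmation that the gap described at the start of this article is closed.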

After reading the docs, I think we should make one final change: we will ingest all logs via the new add-on and disable the inputs we created in the old add-on in part 1 of this series.

Go back to the Diagnostic Setting -> Edit settings -> Check the boxes to also send SignInLogs, AuditLogs, and Graph logs to the Event Hub.

Why this matters:

I know initially I said we were going to continue ingesting:

Interactive sign-ins and audit logs via the legacy Azure / Graph REST inputs, and

Non-interactive, service principal, and Graph activity logs via Event Hub.

While this works, when I look at the logs it introduces schema inconsistencies. The same logical events arrive with:

different field names,

different nesting (properties.* vs flattened fields),

and different normalization assumptions.

That makes correlation, detection logic, and reuse of queries more fragile.
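Here is a small illustration of the nesting problem. Both records are made up and trimmed to two fields; the helper flattens nested dictionaries into dotted paths so the two shapes can be compared side by side.

```python
def flatten(record: dict, prefix: str = "") -> dict:
    """Flatten nested dicts into dotted field paths, e.g. properties.appId."""
    flat = {}
    for key, value in record.items():
        path = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict):
            flat.update(flatten(value, path))
        else:
            flat[path] = value
    return flat


# Made-up records: the same sign-in as it might look from each ingestion path.
eventhub_style = {
    "category": "SignInLogs",
    "properties": {"appId": "app-1", "userPrincipalName": "user@example.com"},
}
graph_style = {"appId": "app-1", "userPrincipalName": "user@example.com"}

eventhub_fields = set(flatten(eventhub_style))
graph_fields = set(flatten(graph_style))
print(sorted(eventhub_fields - graph_fields))
print(sorted(graph_fields - eventhub_fields))
```

A search written against `appId` silently misses every event where the value lives at `properties.appId`, and that is the fragility I wanted to avoid.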

Additionally, the documentation had a section on the schema changes between the Splunk Add-on for Microsoft Azure and the Splunk Add-on for Microsoft Cloud Services. Quite a few fields changed. It would be very inefficient to try to normalize these when an easier alternative is to take everything in via the diagnostic setting and Event Hub.

By sending all Entra logs through Event Hub, we:

unify the ingestion path,

standardize schemas across sign-ins and audit events,

avoid gaps between “interactive” and “non-interactive” activity,

and simplify detection engineering for the attack paths in this lab.

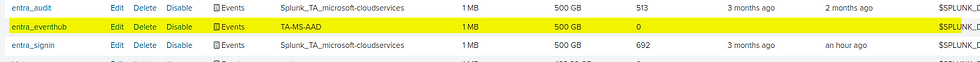

In Splunk, go to Apps -> Splunk Add-on for Microsoft Azure -> Inputs.

Disable the two inputs we created for sign-ins and audit logs via the older Splunk add-on.

They should be coming through the Event Hub now that we have updated the diagnostic settings.

One final note: it took a very long time for the Microsoft Graph Activity Logs to start coming in. What I ended up doing was deselecting that category from my original diagnostic setting and creating a separate diagnostic setting to send the Graph activity logs to the same Event Hub.

We are now set to move forward with Scenario 2 of the Entra ID Attack & Defense series.
